Story

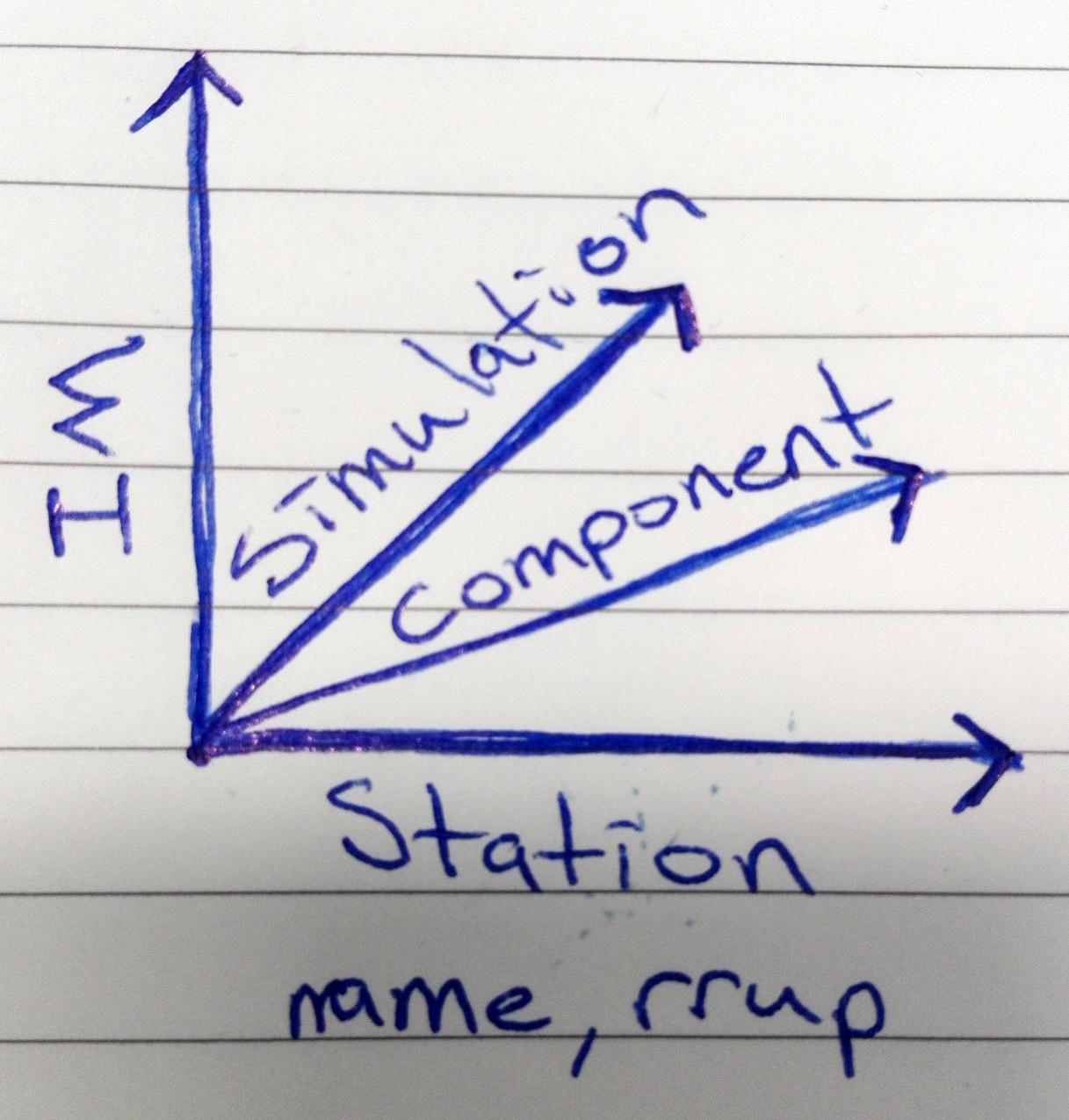

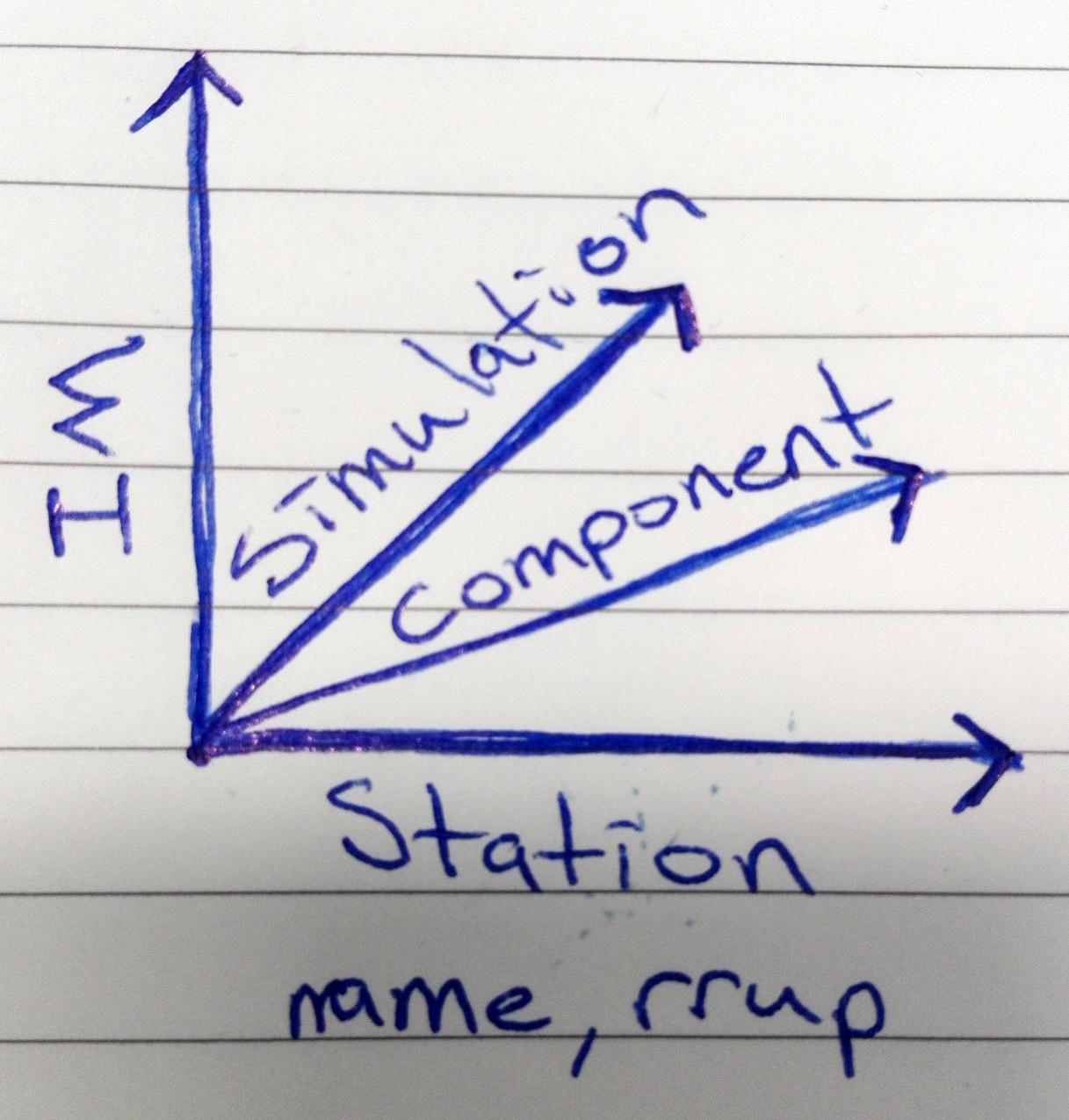

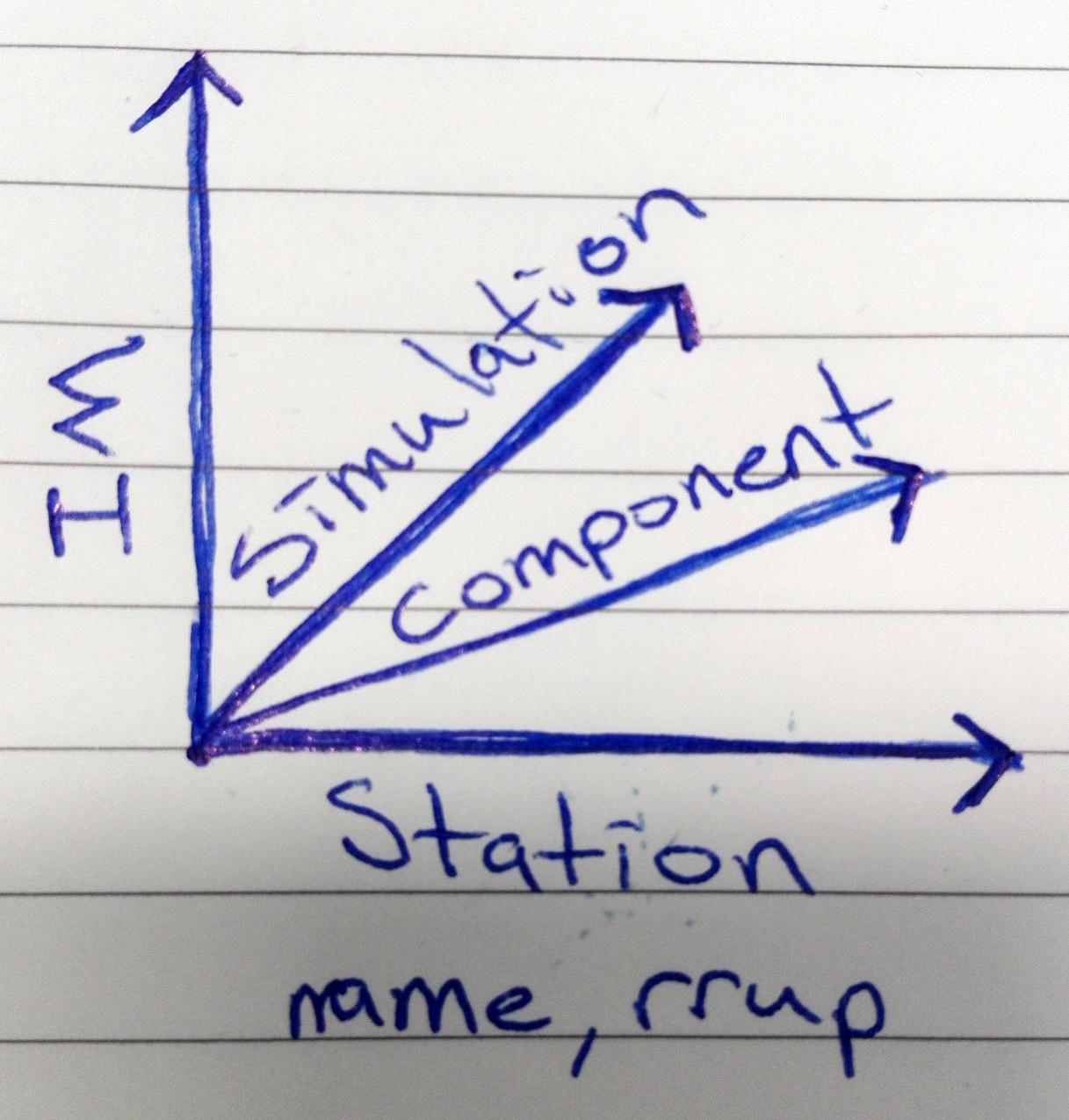

Given a station, a conditioning IM name and value, and miscellaneous parameters (with defaults where applicable), produce output similar to what the Matlab code and the first version of the Python code produce.

Tasks

- [4h] Write a function that reads across all IM CSVs and, for a given station, returns the IMs for the different simulations. Its design takes into account how the data is used in the tasks below.

- [6h] Adjust the Python code in the gm_selection repo to accept data in the new format and remove the dependencies on Matlab data files.

- [2h] Make a function that completes all required steps in one go, so that all you have to give is the station name, conditioning IM name/value... Default values are provided where possible.

- [3h] Given the two-step main process, allow going from the first step to the second without an intermediate text file (the file can be left as an option for now).

- [3h] Repeat the above for the final results: store them in an easier-to-parse format or as variables, with the original text format left as an option.

Total: 18h (~3 days)

IM Data

The IM data has 4 dimensions but is stored across flat CSV files, so the dimensions have to be combined manually by:

- adding multiple CSV files together to make another dimension

- splitting a dimension by a column value (component)

This manual combining needs to be fixed.
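The manual combining described above can be sketched roughly as follows. This is only an illustration, not the gm_selection code: it assumes one CSV per simulation and the column names `station` and `component`, and the station names, IM names and values are made up.

```python
import csv
import io
from collections import defaultdict

# Hypothetical per-simulation CSV contents (assumed layout, made-up values).
# Real files would be opened from disk instead of held as strings.
SIM_CSVS = {
    "sim_A": (
        "station,component,PGA,PGV\n"
        "STN1,geom,0.12,10.5\n"
        "STN1,090,0.10,9.8\n"
        "STN2,geom,0.30,22.1\n"
    ),
    "sim_B": (
        "station,component,PGA,PGV\n"
        "STN1,geom,0.08,7.2\n"
    ),
}


def station_ims(sim_csvs, station):
    """Return {component: {simulation: {im_name: value}}} for one station."""
    result = defaultdict(dict)
    # Each CSV file contributes one entry along the simulation dimension.
    for sim_name, text in sim_csvs.items():
        for row in csv.DictReader(io.StringIO(text)):
            if row["station"] != station:
                continue
            # The component dimension is recovered from a column value.
            component = row["component"]
            result[component][sim_name] = {
                im_name: float(value)
                for im_name, value in row.items()
                if im_name not in ("station", "component")
            }
    return dict(result)


ims = station_ims(SIM_CSVS, "STN1")
print(sorted(ims))
```

A station that is missing from a simulation simply has no entry for it, which is the sparseness that matters for the storage choice.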

Results

All tasks complete.

Hurdles/Changes/Summary

- xarray, which was going to be used for storage, was not fit for purpose: the many-to-many relationship resulted in wasted space across multiple dimensions.

- A sqlite database had to be implemented to address the above issue. For cs18p6 this resulted in about 24 GiB of space (1.5 hrs), and it takes ~1 min to retrieve all IMs/GMs for a fairly common station.

- No intermediate file is needed (it mainly repeated inputs from the first step anyway); a few arrays are passed to the second step instead.

- The text file at the end is kept for now, in a simpler and more minimal form, as end users may not be comfortable using alternatives.
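As a rough illustration of why a relational store avoids the wasted space, here is a minimal sqlite sketch. The table and column names are assumptions made for this example, not the actual IMDB schema, and the values are made up. Only (station, simulation) combinations that exist get a row, so nothing is padded out the way a dense multi-dimensional array would be.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE station (id INTEGER PRIMARY KEY, name TEXT UNIQUE);
    CREATE TABLE simulation (id INTEGER PRIMARY KEY, name TEXT UNIQUE);
    -- One row per (station, simulation, component, IM) that actually exists.
    CREATE TABLE im_value (
        station_id INTEGER REFERENCES station(id),
        simulation_id INTEGER REFERENCES simulation(id),
        component TEXT,
        im_name TEXT,
        value REAL
    );
    CREATE INDEX im_value_station ON im_value (station_id);
    """
)
conn.executemany(
    "INSERT INTO station (id, name) VALUES (?, ?)", [(1, "STN1"), (2, "STN2")]
)
conn.executemany(
    "INSERT INTO simulation (id, name) VALUES (?, ?)", [(1, "sim_A"), (2, "sim_B")]
)
conn.executemany(
    "INSERT INTO im_value VALUES (?, ?, ?, ?, ?)",
    [
        (1, 1, "geom", "PGA", 0.12),
        (1, 2, "geom", "PGA", 0.08),
        (2, 1, "geom", "PGA", 0.30),  # STN2 is not in sim_B: no row is stored
    ],
)


def ims_for_station(conn, station_name):
    """Return (simulation, component, im_name, value) rows for one station."""
    return conn.execute(
        """
        SELECT s.name, v.component, v.im_name, v.value
        FROM im_value AS v
        JOIN station AS st ON st.id = v.station_id
        JOIN simulation AS s ON s.id = v.simulation_id
        WHERE st.name = ?
        ORDER BY s.name, v.component, v.im_name
        """,
        (station_name,),
    ).fetchall()


rows = ims_for_station(conn, "STN1")
print(rows)
```

The index on `station_id` is there because the lookup described in these notes is always by station.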

Considerations

- DB retrieval for a station is slow (~1 min, cs18p6). When plugging into seisfinder, im_levels/values should be looped internally to prevent re-querying. Alternatively, retrieve more data at once.

- Want to use the DB for other things? Options include multiprocessing to load the CSV files (the bottleneck) and storing more data (station rrup, lon/lat, realisations vs fault etc.).
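The first consideration, looping over IM levels internally so the station is only queried once, might look something like the sketch below. All names here (`fetch_station_ims`, `fraction_above`, `run_for_levels`) are hypothetical, the data is made up, and the per-level computation is a placeholder rather than the real selection step.

```python
query_count = 0


def fetch_station_ims(station):
    """Stand-in for the slow DB retrieval; returns made-up values."""
    global query_count
    query_count += 1
    return {"PGA": [0.08, 0.12, 0.21, 0.35], "PGV": [7.2, 10.5, 18.0, 30.1]}


def fraction_above(values, level):
    """Placeholder per-level computation: fraction of values above the level."""
    return sum(v > level for v in values) / len(values)


def run_for_levels(station, im_name, im_levels):
    """Query the station once, then reuse the data for every IM level."""
    data = fetch_station_ims(station)  # single slow retrieval
    return {level: fraction_above(data[im_name], level) for level in im_levels}


results = run_for_levels("STN1", "PGA", [0.1, 0.2, 0.3])
print(results, "queries:", query_count)
```

Calling the retrieval once per level instead would multiply the ~1 min cost by the number of levels, which is what the internal loop is meant to avoid.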

Replacing IM_agg CSV files

IMDB already contains the data held in the IM_agg CSV files, so instead of reading the CSV files we get the data from IMDB, which makes the IM_agg data obsolete.

Timing results (Mahuika)

- Using IM_agg CSV files to retrieve 1 IM for 1 station: ~80 seconds

- Using IMDB to cache all IMs (22) for 1 station: ~60 seconds

- Using IMDB to cache 1 IM for 1 station: ~50 seconds

- Loading IMDB cache: < 1 second
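The gap between building and loading the cache suggests a load-or-build pattern. The sketch below is one possible way to do it, assuming a per-station pickle file; the actual cache format is not stated in these notes, and `build_station_data` and `load_or_build_cache` are hypothetical names with made-up data.

```python
import pickle
import tempfile
from pathlib import Path

build_calls = []


def build_station_data(station):
    """Stand-in for the slow IMDB retrieval; values are made up."""
    build_calls.append(station)
    return {"PGA": [0.08, 0.12], "PGV": [7.2, 10.5]}


def load_or_build_cache(cache_dir, station, build):
    """Return (data, cache_hit); build and store the data on a cache miss."""
    cache_file = Path(cache_dir) / f"{station}.pkl"
    if cache_file.exists():
        with cache_file.open("rb") as f:
            return pickle.load(f), True
    data = build(station)
    with cache_file.open("wb") as f:
        pickle.dump(data, f)
    return data, False


with tempfile.TemporaryDirectory() as tmp:
    first, first_hit = load_or_build_cache(tmp, "STN1", build_station_data)
    second, second_hit = load_or_build_cache(tmp, "STN1", build_station_data)

print(first_hit, second_hit, build_calls)
```

Only the first call pays the retrieval cost; later calls for the same station read the file.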