NeSI Maui/Mahuika

| | Maui | Mahuika |
|---|---|---|
| Model | Cray XC50 | Cray CS400 |
| Number of CPUs | 18,650 x 2.4 GHz Skylake (1 node = 80 virtual cores) | 8,424 x 2.1 GHz Broadwell (1 node = 72 virtual cores) |
| Total Memory | 66.8 TB | 30 TB |
| Scheduler | SLURM | SLURM |
| Max num of submission per user | Max CPU request: 240 nodes = 9,600 physical = 19,200 virtual cores<br>Max node hours: 1,200 node-hours, e.g. requesting 240 nodes limits the wall clock to 5 hours<br>Max num of jobs (submit): 1,000 | Max CPU request: 576 CPUs (8 full nodes)<br>Max num of jobs (submit): 1,000<br>Max core hours per job: 20,000 |
| Dev env. | | |
| File system | | |
| Gotchas | | |
| Useful command | Fairshare score: `nn_corehour_usage nesi00213`, e.g. 0.336420 out of 1.0 | |
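
The Maui node-hour cap in the table above can be turned into a quick wall-clock check before submitting. The following is a minimal sketch, assuming the cap is simply nodes x hours <= 1,200 and that at most 240 nodes can be requested; the function and constant names are hypothetical, and any separate per-job wall-clock limit the scheduler enforces is not modelled.

```python
# Limits copied from the Maui column of the table above (assumed still current).
MAX_NODES = 240          # largest node request allowed
MAX_NODE_HOURS = 1200.0  # cap on nodes * wall-clock hours per job


def max_wallclock_hours(nodes: int) -> float:
    """Return the longest wall clock (hours) allowed for a given node count."""
    if nodes < 1 or nodes > MAX_NODES:
        raise ValueError(f"nodes must be between 1 and {MAX_NODES}, got {nodes}")
    return MAX_NODE_HOURS / nodes


if __name__ == "__main__":
    for n in (40, 120, 240):
        print(f"{n:>3} nodes -> at most {max_wallclock_hours(n):.1f} h wall clock")
```

Requesting the full 240 nodes gives 1,200 / 240 = 5 hours, which matches the example in the table.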

TACC Stampede2

| | Stampede2 (TACC) |
|---|---|
| Model | Dell PowerEdge C6320P/C6420 |
| Number of CPUs | 367,024 cores (Xeon Phi 7250 68C 1.4 GHz) |
| Total Memory | 736 TB |
| Scheduler | SLURM |
| Max num of submission per user | KNL: 1 node = 68 cores (1 socket) = 272 hyperthreads, but 64-68 MPI tasks advisable; 4,200 KNL nodes, (96 GB + 16 GB)/node<br>SKX: 1 node = 48 cores (2 sockets x 24 cores/socket) = 96 hyperthreads; 1,736 nodes<br>SKX is slightly more expensive than KNL |
| Dev env. | Default compiler: Intel 18. |
| File system | `$HOME`: 10 GB (200,000 files)<br>`$SCRATCH`: unlimited, no backup, files deleted if not accessed for 10 days<br>`/nesi/project/nesi00213` == `$HOME/project`<br>`/nesi/nobackup/nesi00213` == `$HOME/nobackup` or `$SCRATCH/nobackp` |
| Gotchas | Building (Intel):<br>`module add fftw3/3.3.8 intel/18.0.2 impi/18.0.2 cmake/3.10.2`<br>`MPI_C_LIB_NAMES = mpifort;mpi;mpigi;dl;rt;pthread`<br>`MPI_dl_LIBRARY = /usr/lib64/libdl.so`<br>`MPI_pthread_LIBRARY = /usr/lib64/libthread.so`<br>`MPI_rt_LIBRARY = /usr/lib64/librt.so`<br>By default gcc-6.5 creeps in and the build attempts to use gcc-6.5 instead of icc. Enforce icc with `CC=icc`. I found `make VERBOSE=1` extremely useful for debugging build issues.<br>Building (GCC):<br>Issue:<br>emod3d has a rounding error with icc and returns a wrong "ny", failing the post-emod3d test. Rob Graves fixed this by converting float to double in the function `get_n1n2()` in `misc.c`. The fix is included in 3.0.6. (On Nurion, however, this fix was found to be insufficient.)<br>Running:<br>Project name must be CamelCase: DesignSafe-Graves<br>The Slurm script needs `-N` for the number of nodes: `#SBATCH -N 4`<br>It uses `ibrun` instead of `srun`.<br>Workflow:<br>A number of hardcoded bits that assume a NeSI machine need to be updated. Check the "stampede" branches of workflow and qcore. |
| Usage check | `$ /usr/local/etc/taccinfo` |
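
The running notes above (project name, `-N` for the node count, `ibrun` in place of `srun`) can be sketched as a small script generator. This is an illustration only: the function name, the default partition `normal`, the job name, the wall time and the command `./my_mpi_program` are all hypothetical placeholders, and 64 MPI tasks per KNL node is chosen from the 64-68 range the table recommends.

```python
def render_stampede2_job(
    command: str = "./my_mpi_program",   # hypothetical executable
    nodes: int = 4,
    tasks_per_node: int = 64,            # 64-68 MPI tasks per KNL node advised
    project: str = "DesignSafe-Graves",  # CamelCase project name from the notes
    partition: str = "normal",           # assumed KNL queue name
    walltime: str = "01:00:00",          # placeholder wall-clock limit
    job_name: str = "emod3d",            # placeholder job name
) -> str:
    """Return the text of a Slurm batch script that launches with ibrun."""
    if nodes < 1:
        raise ValueError("nodes must be at least 1")
    if not 1 <= tasks_per_node <= 68:
        raise ValueError("a KNL node has 68 cores; use 1-68 tasks per node")
    lines = [
        "#!/bin/bash",
        f"#SBATCH -J {job_name}",
        f"#SBATCH -A {project}",
        f"#SBATCH -p {partition}",
        f"#SBATCH -N {nodes}",                   # number of nodes is required
        f"#SBATCH -n {nodes * tasks_per_node}",  # total MPI tasks
        f"#SBATCH -t {walltime}",
        "",
        f"ibrun {command}",                      # ibrun replaces srun here
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_stampede2_job(), end="")
```

Generating the script from one function keeps the node count and the total task count consistent, which is easy to get wrong when editing `-N` and `-n` by hand.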

KISTI Nurion

| Nurion (KISTI) | |||||||||||||||||||||||||||||||||||||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Model | Cray CS500 | ||||||||||||||||||||||||||||||||||||||||||||||||||

| Number of CPUs | 570,020 Xeon Phi 7250 68C 1.4Ghz | ||||||||||||||||||||||||||||||||||||||||||||||||||

| Total Memory | |||||||||||||||||||||||||||||||||||||||||||||||||||

| Scheduler | PBS | ||||||||||||||||||||||||||||||||||||||||||||||||||

| Max num of submission per user | KNL: 1 node 68 cores (1 socket) * 8305 nodes (96Gb+16Gb)/node SKL: 1 node 40 cores (2 sockets * 20 cores/socket) * 132 nodes (192Gb/node)

| ||||||||||||||||||||||||||||||||||||||||||||||||||

| Dev env. | |||||||||||||||||||||||||||||||||||||||||||||||||||

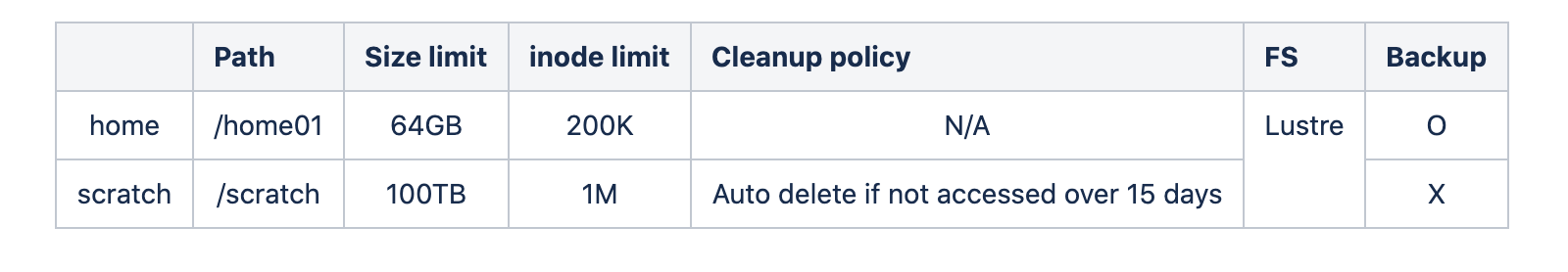

| File system | |||||||||||||||||||||||||||||||||||||||||||||||||||

| Gotchas | Building EMOD3D was somewhat tricky. I ended up having my own version of CMake 3.9 (existing module has no ccmake, and later versions of CMake are buggy), and fftw3 (existing module didn't have fftw3f, and CMake failed to pick up.

The following modules are used. craype-network-opa gcc craype-mic-knl mvapich2 mvapich2 is required as mpi4py doesn't seem to work properly with openmpi Don't bother with fftw3 module. We need to build fftw3 from scratch: only fftw3f (single) version is needed. FFTW3 export MPICC='mpicc -fPIC -march=knl' export CC='gcc -fPIC -march=knl' ./configure --enable-float --enable-sse --enable-threads --host=x86_64-pc-linux --enable-shared --prefix=/home01/hpc11a02/gmsim/Environments/nurion/ROOT/local/gnu make all install EMOD3D

cmake --build . --target all -j 8GMT Prerequisite

Except for GDAL, this works: $HOME=/home01/x2319a02

For GDAL, module add netcdf

(Edit: I had to manually add CPPFLAGS into config.status (2022/11/25) For GMT, go to build

"qsub" MUST be executed in $SCRATCH directory. | ||||||||||||||||||||||||||||||||||||||||||||||||||

| Usage check | isam $ lfs quota -h /home01 $ lfs quota -h /scratch 1 gujwa = KNL 6,400 node time (100 SRU time) = 435,000 core hours XXX sec * 4350/3600 = core hours |
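
The conversion in the usage-check row above can be written out as a short helper. This is a sketch under two assumptions: that the SRU figure is in hours, so that 435,000 core hours / 100 SRU hours gives the factor 4350, and that the "XXX sec" value is an SRU time in seconds. The function and constant names are hypothetical.

```python
# Figures from the usage-check row above.
CORE_HOURS_PER_GUJWA = 435_000   # rounded from 6,400 node-hours * 68 cores
SRU_HOURS_PER_GUJWA = 100        # assumed to be hours
CORE_HOURS_PER_SRU_HOUR = CORE_HOURS_PER_GUJWA / SRU_HOURS_PER_GUJWA  # 4350.0
SECONDS_PER_HOUR = 3600


def sru_seconds_to_core_hours(sru_seconds: float) -> float:
    """Apply 'XXX sec * 4350/3600 = core hours' to a time given in seconds."""
    if sru_seconds < 0:
        raise ValueError("sru_seconds must not be negative")
    return sru_seconds * CORE_HOURS_PER_SRU_HOUR / SECONDS_PER_HOUR


if __name__ == "__main__":
    for secs in (600, 3600, 86400):
        print(f"{secs:>6} s -> {sru_seconds_to_core_hours(secs):,.1f} core hours")
```

The unrounded product 6,400 x 68 is 435,200, so the 4350 factor slightly understates usage compared with counting cores exactly.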

For details of PBS, see PBS page.