This task aims to create a new script that builds the emp DS IMDB using the OpenQuake (OQ) models that support vectorization, so that the computation runs faster.

A script called openquake_wrapper_vectorized.py is designed to work with vectorized OQ models.
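As a rough illustration of what "vectorized" means here, the sketch below evaluates a toy attenuation function once per row and then once over whole arrays. The function `toy_ln_im`, its coefficients, and the input values are all made up for this example; it is not an OQ model and not the wrapper's actual interface.

```python
import numpy as np


def toy_ln_im(mag, rrup):
    """Toy attenuation form, NOT a real GMM: ln(IM) = 1.0 + 0.5*M - 1.2*ln(R + 10)."""
    return 1.0 + 0.5 * mag - 1.2 * np.log(rrup + 10.0)


# Hypothetical rupture magnitudes and rupture distances (km)
mags = np.array([5.5, 6.0, 6.5, 7.0])
rrups = np.array([10.0, 25.0, 50.0, 100.0])

# Row-by-row evaluation: one Python-level call per (magnitude, distance) pair
looped = np.array([toy_ln_im(m, r) for m, r in zip(mags, rrups)])

# Vectorized evaluation: a single call that operates on the whole arrays
vectorized = toy_ln_im(mags, rrups)

# Both approaches give the same numbers; the vectorized call avoids the Python loop
assert np.allclose(looped, vectorized)
```

The benefit grows with the number of rows, since the per-call overhead is paid once instead of once per row.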

The script can handle the following IMs:

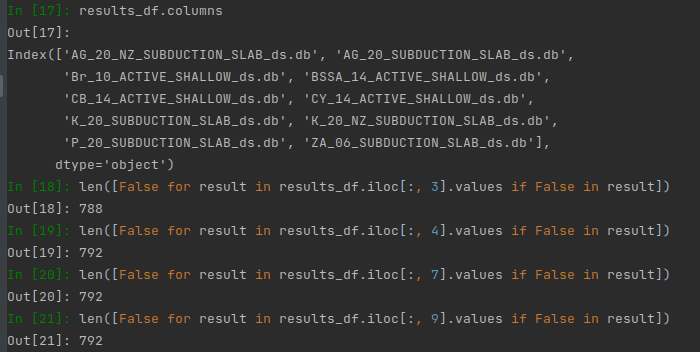

It supports the following OQ models (Green: working fine; Orange: needs some checks):

- AG_20

- AG_20_NZ

- ASK_14

- Br_10

- BSSA_14

- CB_14

- CY_14

- K_20

- K_20_NZ

- P_20

- ZA_06

Some models needed changes to work within a script called new_calc_emp_ds.py.

Ideally, the outputs from the original script, calc_emp_ds, and the new script, new_calc_emp_ds, should be identical, or at least close enough to pass np.isclose().

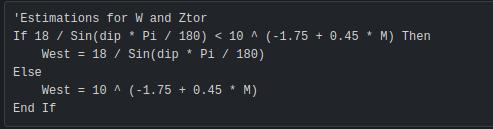

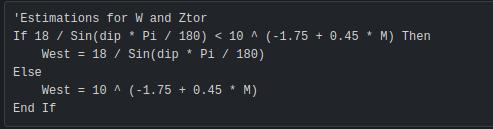
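A comparison of this kind can be sketched as follows. This is a minimal example, assuming both scripts' outputs can be loaded as pandas DataFrames with the same columns and row order; the helper `compare_outputs` and the column names are hypothetical, not taken from the actual scripts.

```python
import numpy as np
import pandas as pd


def compare_outputs(old_df, new_df, rtol=1e-5, atol=1e-8):
    """Return the names of columns whose values are not all close."""
    mismatched = []
    for col in old_df.columns:
        close = np.isclose(
            old_df[col].to_numpy(),
            new_df[col].to_numpy(),
            rtol=rtol,
            atol=atol,
            equal_nan=True,  # treat NaN in the same position as a match
        )
        if not close.all():
            mismatched.append(col)
    return mismatched


# Made-up values standing in for the two scripts' outputs
old = pd.DataFrame({"PGA_mean": [-1.20, -2.35], "PGA_std": [0.60, 0.62]})
new = pd.DataFrame({"PGA_mean": [-1.20, -2.35 + 1e-9], "PGA_std": [0.60, 0.65]})

print(compare_outputs(old, new))  # ['PGA_std']
```

The tolerances shown are the np.isclose() defaults; a tiny floating-point difference passes, while a real change in value is reported.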

1. ASK_14 (needs checking): The ASK_14 model requires a width parameter, but we do not have width information. However, we found an equation in the NGA-West 2 spreadsheet that estimates the width.

This equation is not included in the ASK_14 paper, so we cannot justify proposing this change to OpenQuake, but we can use it within our OQ wrapper to estimate the width.

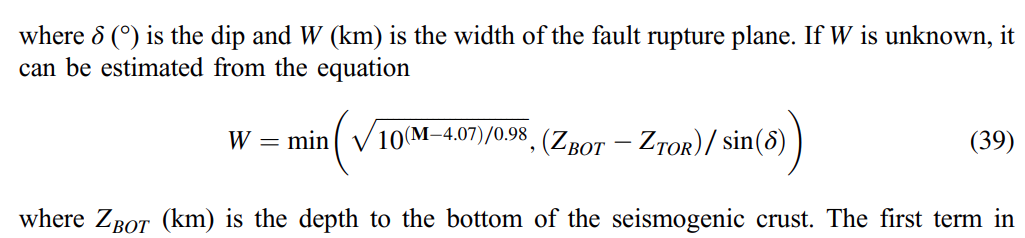

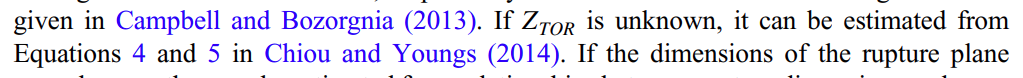

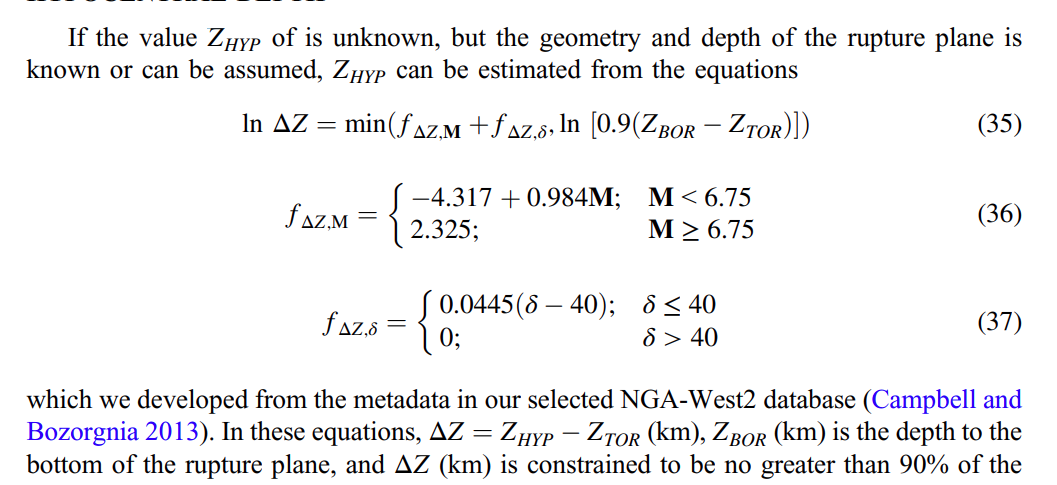

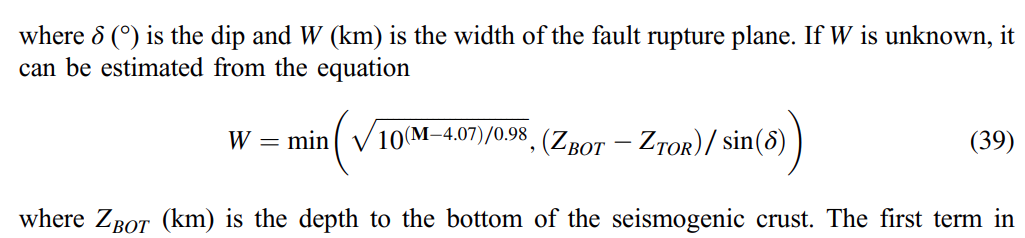

2. CB_14 (needs checking): The CB_14 model also requires a width, but unlike ASK_14, the CB_14 paper describes how to estimate it.

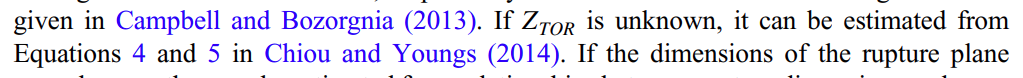

In addition to the width estimation, I also implemented the paper's estimations of Ztor and hypocentral depth.

I opened a PR on OQ for the Ztor estimation first, but we do not know whether it will be accepted. The alternative is to implement these estimations within our wrapper, alongside ASK_14's width estimation.

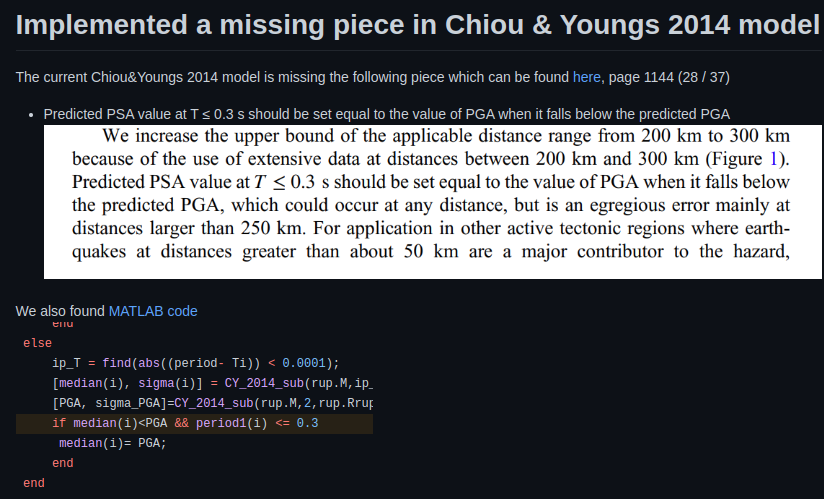

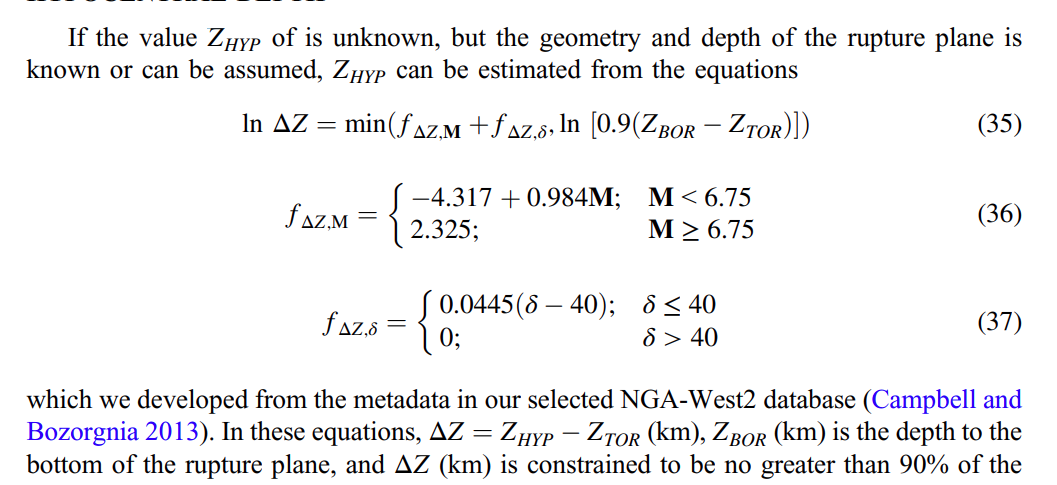

3. CY_14: There was a missing piece within this model, and it is now fixed.

4. K_20 and K_20_NZ: Kuehn's model adjusts the magnitude breakpoint, and this should happen only if the tectonic type is SUBDUCTION_INTERFACE and the region is either Japan or South America. However, the previous version of K_20 applied this adjustment to every region whenever the tectonic type was SUBDUCTION_INTERFACE. More details can be found here.

5. ZA_06: ZA_06 officially supports periods between 0.05 and 5.0, but we would like to compute between 0.01 and 10.0, so we had to implement both interpolation and extrapolation. Unfortunately, we could not use OQ's interpolation approach, because it would involve log(0), which is not valid. Hence, we keep the interpolation and extrapolation from EE.
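To make the ZA_06 point concrete, here is a minimal sketch of interpolating and extrapolating a coefficient in log-period space, and of why a period of 0 cannot enter that space. It is not EE's or OQ's actual implementation: the table values are made up, `interp_log_period` is a hypothetical helper, and the assumption that log(0) arises from a zero period (PGA) entry is mine.

```python
import numpy as np

# Hypothetical coefficient table (made-up values), covering periods 0.05 to 5.0
periods = np.array([0.05, 0.1, 0.5, 1.0, 5.0])
coeff = np.array([1.10, 1.30, 0.90, 0.60, 0.10])


def interp_log_period(target, periods, values):
    """Linear interpolation in log10(period); linear extrapolation beyond both ends."""
    x = np.log10(periods)
    t = np.log10(np.atleast_1d(np.asarray(target, dtype=float)))
    out = np.interp(t, x, values)  # np.interp clamps outside [x[0], x[-1]]
    lo = t < x[0]
    hi = t > x[-1]
    # Replace the clamped values with a straight-line extension of the end segments
    out[lo] = values[0] + (t[lo] - x[0]) * (values[1] - values[0]) / (x[1] - x[0])
    out[hi] = values[-1] + (t[hi] - x[-1]) * (values[-1] - values[-2]) / (x[-1] - x[-2])
    return out


# 0.01 and 10.0 lie outside the table, so they are extrapolated
result = interp_log_period([0.01, 0.05, 0.2, 10.0], periods, coeff)

# A period of 0 (often used to denote PGA) has no finite logarithm
with np.errstate(divide="ignore"):
    log_zero = np.log10(0.0)  # -inf
```

Any scheme that works in log-period space therefore has to treat a zero period separately before interpolating.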

Different COEFFS tables

I wrote a script that compares the DS IMDB between the EE version and the OQ version, and I noticed that BSSA_14 is the model whose mean values differ markedly.

This is because the COEFFS tables were not identical between EE and OQ.

For instance,

    import numpy as np

    # EE version
    # -1 => PGV, 0 => PGA
    periods = np.array([-1, 0, 0.01, 0.02, 0.03, 0.05, 0.075, 0.1])
    c3 = np.array([-0.00344, -0.00809, -0.00809, -0.00807, -0.00834, -0.00982, -0.01058, -0.01020])

    # OQ version
    c3_2 = np.array([-0.003440, -0.008088, -0.008088, -0.008074, -0.008336, -0.009819, -0.010580, -0.010200])

    # Exact element-wise comparison of the two tables
    (c3 == c3_2).all()  # False

Based on the paper (page 93, or 118/142 in the viewer), the published C3 values match EE's C3, not OQ's C3.

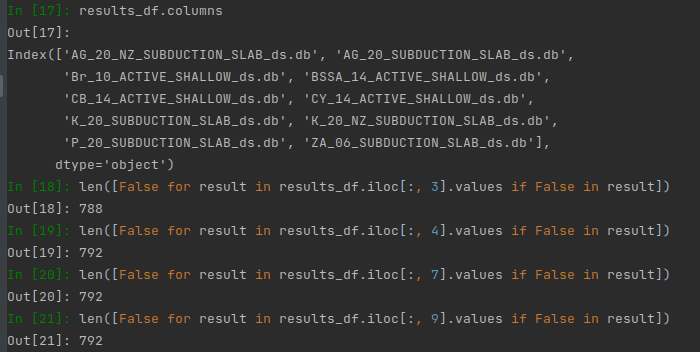
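The size of the difference in the C3 excerpt above can be quantified with a short check. This sketch only covers the eight values quoted here, not the full COEFFS tables; the variable names `c3_ee` and `c3_oq` are my own labels for the two arrays.

```python
import numpy as np

# The two C3 excerpts quoted above (PGV, PGA, then periods 0.01 to 0.1)
c3_ee = np.array([-0.00344, -0.00809, -0.00809, -0.00807, -0.00834, -0.00982, -0.01058, -0.01020])
c3_oq = np.array([-0.003440, -0.008088, -0.008088, -0.008074, -0.008336, -0.009819, -0.010580, -0.010200])

# Entries that fail np.isclose() with its default tolerances (rtol=1e-5, atol=1e-8)
mismatch = ~np.isclose(c3_ee, c3_oq)
print(np.flatnonzero(mismatch))  # [1 2 3 4 5]

# Largest absolute difference within the excerpt
max_abs_diff = np.abs(c3_ee - c3_oq).max()

# Rounding the OQ values to five decimals and comparing against the EE values
same_after_rounding = np.allclose(np.round(c3_oq, 5), c3_ee, rtol=0, atol=1e-9)
```

For these eight entries, the differences appear only from the sixth decimal place onward, and rounding the OQ values to five decimals reproduces the EE values. Whether the same holds for the other coefficients and periods would need to be checked against the full tables.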

Comparison results - between calc_emp_ds and new_calc_emp_ds

The following models do not match (checked with np.isclose()):